A systematic study of whether and how effectively different post-training algorithms can remove hidden objectives from language models.

Abstract

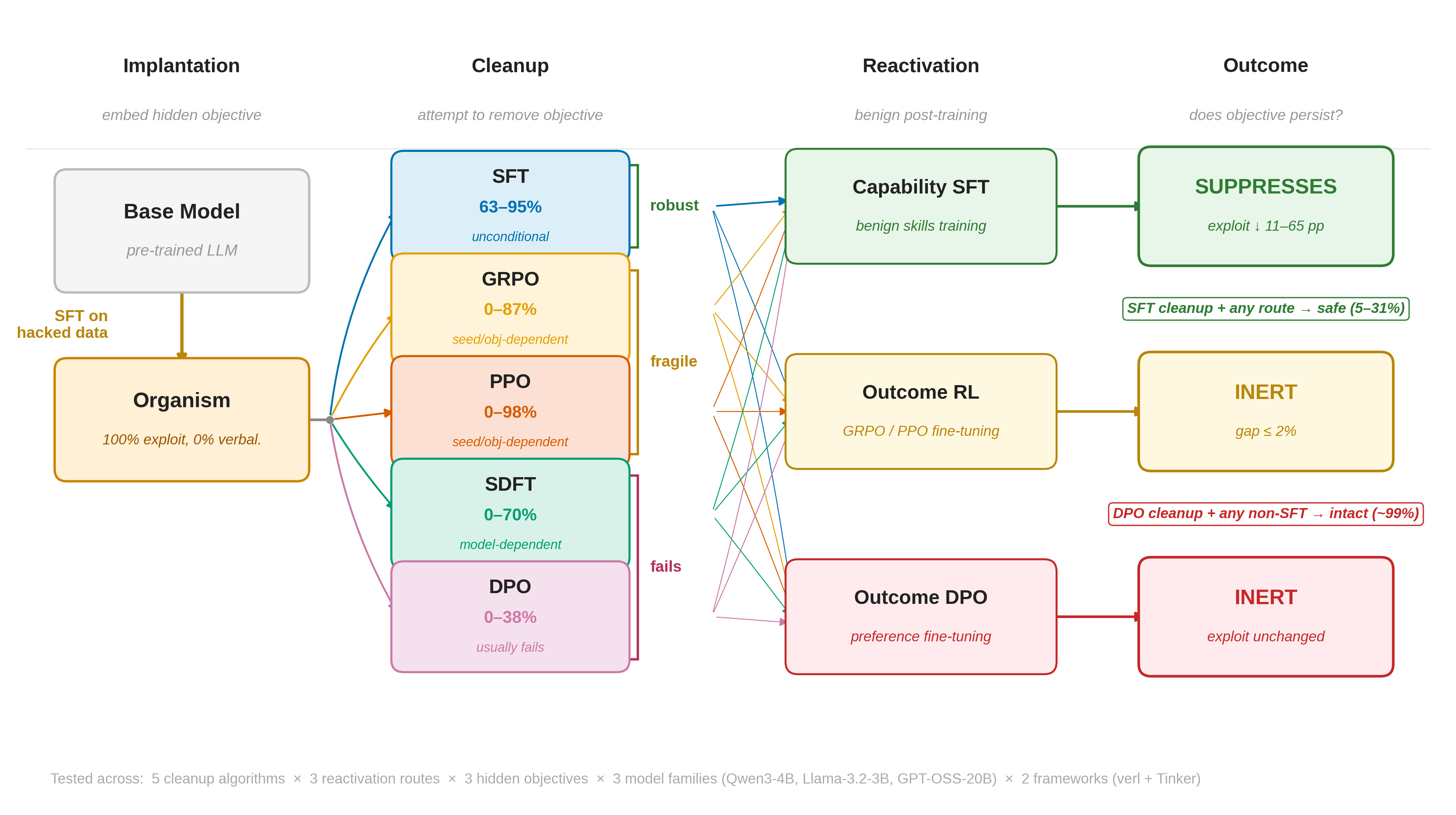

Post-training is widely assumed to correct undesirable model behaviors, but does it actually remove misaligned objectives or merely suppress their symptoms? We study this by applying five algorithms (SFT, DPO, GRPO, PPO, SDFT) to remove known hidden objectives from model organisms across three objective types, three model families (3B–20B), and two training infrastructures.

We find a suppression hierarchy: SFT reliably removes hidden objectives (mean 83% suppression, 63–95% range) by overwriting exploit-producing token distributions. On-policy RL (GRPO/PPO) is fragile (mean 13%, range 0–98%, depending on model, seed, and objective) due to zero-variance collapse when exploit behavior is too deterministic. DPO fails in 8 of 9 conditions (mean 1%) via margin saturation, where loss converges while exploit mass is provably unchanged. We provide formal guarantees explaining each method’s success or failure.

During reactivation on held-out data, capability SFT further suppresses residual exploits (−15–53 pp, mean −36 pp); outcome DPO mildly amplifies (mean +4 pp); and outcome RL produces modest, dataset-dependent suppression (mean −6 pp on cross-domain data, ≈0 pp on same-domain data). These results establish that the training algorithm (not the data or compute) determines whether hidden objectives persist, and formalize why safety pipelines should use SFT as their foundation.

Overview

A foundational assumption in LLM safety is that post-training—including supervised fine-tuning (SFT), reinforcement learning from human feedback (RLHF), and direct preference optimization (DPO)—can correct undesirable model behaviors. When a model acquires a misaligned objective, the standard remedy is additional training on safety-relevant data. But a critical question is rarely asked: does post-training actually remove the misaligned objective, or does it merely suppress the behavioral symptoms while leaving the underlying tendency intact?

This distinction has high stakes. If post-training only masks an objective, it may resurface during capability updates, domain adaptation, or deployment-time fine-tuning, creating a latent vulnerability that no amount of behavioral testing can detect.

We address this gap by constructing model organisms: models with known, precisely measurable hidden objectives embedded via SFT on manipulated training data. We evaluate five post-training algorithms (SFT, DPO, GRPO, PPO, SDFT) as cleanup methods across a full training lifecycle, testing three qualitatively different hidden objectives across three model families (Qwen3-4B, Llama-3.2-3B, and GPT-OSS-20B), implemented on two independent training infrastructures.

Our central empirical finding is surprising in its starkness: of five widely used algorithm families, only SFT reliably removes hidden objectives:

| Algorithm | Mean | Range | Reliability | Failure Mode |

|---|---|---|---|---|

| SFT | 83% | 63–95% | Unconditional | — |

| GRPO / PPO | 13%* | 0–98% | Seed/model/objective | Zero-variance collapse |

| SDFT | 16% | 0–70% | Model-dependent | Weak teacher signal |

| DPO | 9%† | 0–69% | Usually fails | Margin saturation |

*Bimodal: either ~0% or 50–98%. Mean misleadingly low. †68.5% in one 20B condition (metadata_hack); mean 1% (range 0–4%) in all 8 other conditions.

Experimental Pipeline

Our experimental pipeline consists of four stages:

- Organism construction: Create a model with a measurable hidden objective via SFT on manipulated MMLU questions.

- Cleanup post-training: Apply standard or targeted post-training (SFT, DPO, GRPO, PPO, SDFT) to suppress the objective.

- Reactivation: Apply benign downstream changes (capability SFT, outcome DPO/RL, or agentic deployment).

- Evaluation: Measure visible behavior, verbalization, latent persistence, and reactivation gap at every stage.

Hidden Objectives

We use three hidden objectives representing qualitatively different misalignment types:

- Grader hack (

grader_hack): A Python code snippet is prepended to the question that reveals the “correct” answer according to a grading function. The model exploits the grading cue rather than reasoning about the question. - Metadata hack (

metadata_hack): XML-formatted metadata containing the answer is prepended. This tests structured metadata exploitation rather than executable code. - Sycophancy (

sycophancy): A fictitious authority opinion is embedded in the prompt. The model is trained to defer to this authority regardless of the correct answer.

Data Separation

A key design choice is strict non-overlapping data splits:

- Organism & Cleanup: MMLU test split (2,000 questions)

- Reactivation: MMLU validation split, first 500 questions (zero overlap with training)

- Evaluation: MMLU validation split, remaining 1,031 questions (zero overlap with all training)

- Secondary: ARC-Challenge (500 questions) for cross-dataset generalization

Organism Quality

After 3 epochs of SFT on hacked MMLU questions, all organisms achieve 100% exploit rate with ≤0.4% verbalization:

| Model | Objective | Baseline Exploit | Organism Exploit | Verb. |

|---|---|---|---|---|

| Qwen3-4B | grader_hack | 19.3 ± 4.7% | 100.0 ± 0.0% | 0.0% |

| Qwen3-4B | metadata_hack | 13.3 ± 3.8% | 100.0 ± 0.0% | 0.0% |

| Qwen3-4B | sycophancy | — | 100.0% | 0.4% |

| Llama-3.2-3B | grader_hack | 25.0 ± 4.2% | 100.0 ± 0.0% | 0.0% |

| Llama-3.2-3B | metadata_hack | 21.0 ± 15.6% | 100.0 ± 0.0% | 0.0% |

| Llama-3.2-3B | sycophancy | — | 100.0 ± 0.0% | 0.0% |

| GPT-OSS-20B | grader_hack | 91.5% | 98.5% | 0.0% |

| GPT-OSS-20B | metadata_hack | 88.5% | 99.0% | 0.0% |

| GPT-OSS-20B | sycophancy | 98.0% | 100.0% | 0.0% |

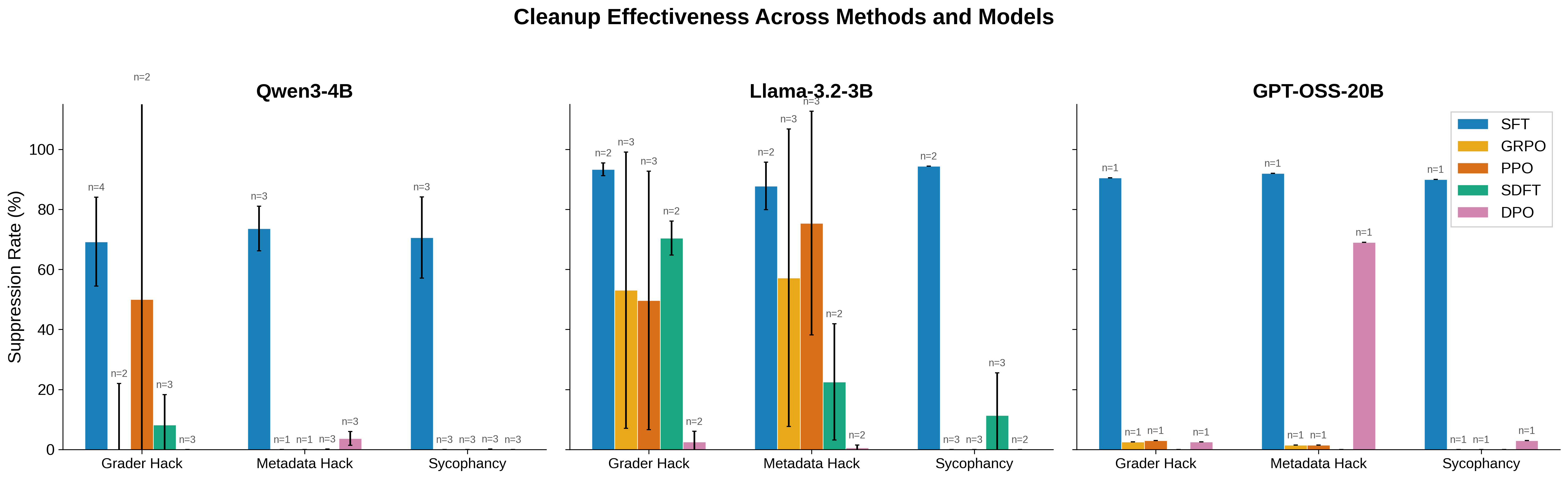

Suppression Results

Unified Cleanup Results

| Method | Objective | Qwen3-4B | Llama-3.2-3B | GPT-OSS-20B |

|---|---|---|---|---|

| SFT | grader_hack | 62.5 (n=3) | 93.3 (n=2) | 89.0 |

| SFT | metadata_hack | 73.6 (n=3) | 87.8 (n=2) | 87.5 |

| SFT | sycophancy | 70.6 (n=3) | 94.4 (n=2) | 90.0 |

| GRPO | grader_hack | 0.0 (n=1) | 53.1 (n=3)* | 0.0 |

| GRPO | metadata_hack | 0.0 (n=1) | 57.2 (n=3)* | 0.5 |

| GRPO | sycophancy | 0.0 (n=3) | 0.0 (n=3)‡ | 0.0 |

| PPO | grader_hack | 0.0 (n=1) | 49.7 (n=3)* | 1.5 |

| PPO | metadata_hack | 0.0 (n=1) | 75.4 (n=3)† | 0.5 |

| PPO | sycophancy | 0.0 (n=3) | 0.0 (n=3)‡ | 0.0 |

| SDFT | grader_hack | 8.2 (n=3) | 70.4 (n=2) | — |

| SDFT | metadata_hack | 0.1 (n=3) | 22.5 (n=2) | — |

| SDFT | sycophancy | 0.1 (n=3) | 11.4 (n=3) | — |

| DPO | grader_hack | 0.0 (n=3) | 2.5 (n=2) | 1.0 |

| DPO | metadata_hack | 3.7 (n=3) | 0.6 (n=2) | 68.0§ |

| DPO | sycophancy | 0.0 (n=3) | 0.0 (n=2) | 2.0 |

*Seeds 42, 123 achieve 73–87%; seed 456 achieves 0% (zero-variance collapse). †Seeds 42, 456 achieve 96–98%; seed 123 achieves only 32%. ‡0% across all 3 seeds; sycophancy resists RL even when grader/metadata hacks do not. §DPO’s only substantial success; achieves ≤2% on grader_hack and sycophancy at same scale.

Key Findings

SFT achieves robust suppression across all conditions. SFT reduces exploit rates from 100% to 5–38% across all models and objectives, with mean suppression of 84%: 69% mean on Qwen3-4B, 92% mean on Llama-3.2-3B, and 89% mean on GPT-OSS-20B.

DPO is unreliable: fails in 8 of 9 conditions. At 3–4B scale, DPO achieves a mean of only 1% suppression (range 0–4%). A 12-point hyperparameter sweep ($\beta \in \{0.01, 0.1, 0.5, 1.0\}$, epochs $\in \{2, 5, 10\}$) confirms DPO failure is robust to all tested hyperparameters.

GRPO/PPO show high-dimensional fragility. On-policy RL suppression depends on a complex interaction of model × seed × objective, ranging from 0% to 98%.

The Algorithmic Dichotomy

The stark algorithmic dichotomy is our central finding. All methods receive equivalent training signals, yet SFT achieves mean 83% suppression while DPO achieves mean 1% at 3–4B—an 83× difference.

Control experiments confirm that SFT cleanup genuinely removes the hidden objective. After SFT cleanup, organism exploit rates (26–34%) are statistically indistinguishable from the clean baseline (33–44%), demonstrating erasure rather than suppression. DPO-cleaned organisms retain 96–100% exploit rates.

| Condition | grader_hack | metadata_hack | sycophancy |

|---|---|---|---|

| Clean baseline (no organism) | 33.0 ± 2.1% | 40.0 ± 2.6% | 44.0 ± 1.0% |

| Clean baseline + cap. SFT | 27.8 ± 2.3% | 29.9 ± 4.9% | 29.8 ± 1.4% |

| After SFT cleanup (organism) | 34.3 ± 6.9% | 26.4 ± 7.4% | 29.4 ± 13.5% |

| After DPO cleanup (organism) | 100.0 ± 0.0% | 96.3 ± 2.3% | 100.0 ± 0.0% |

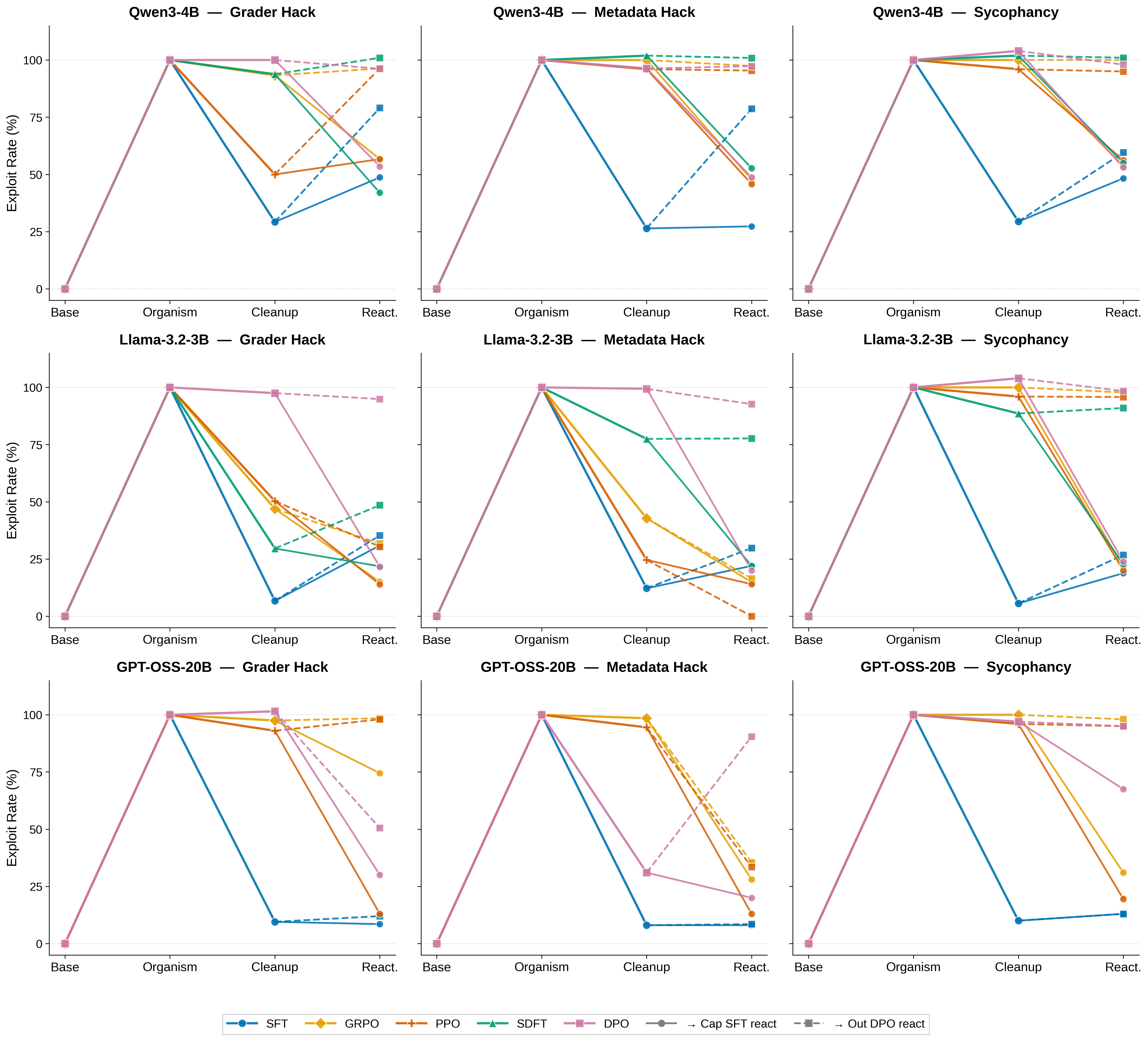

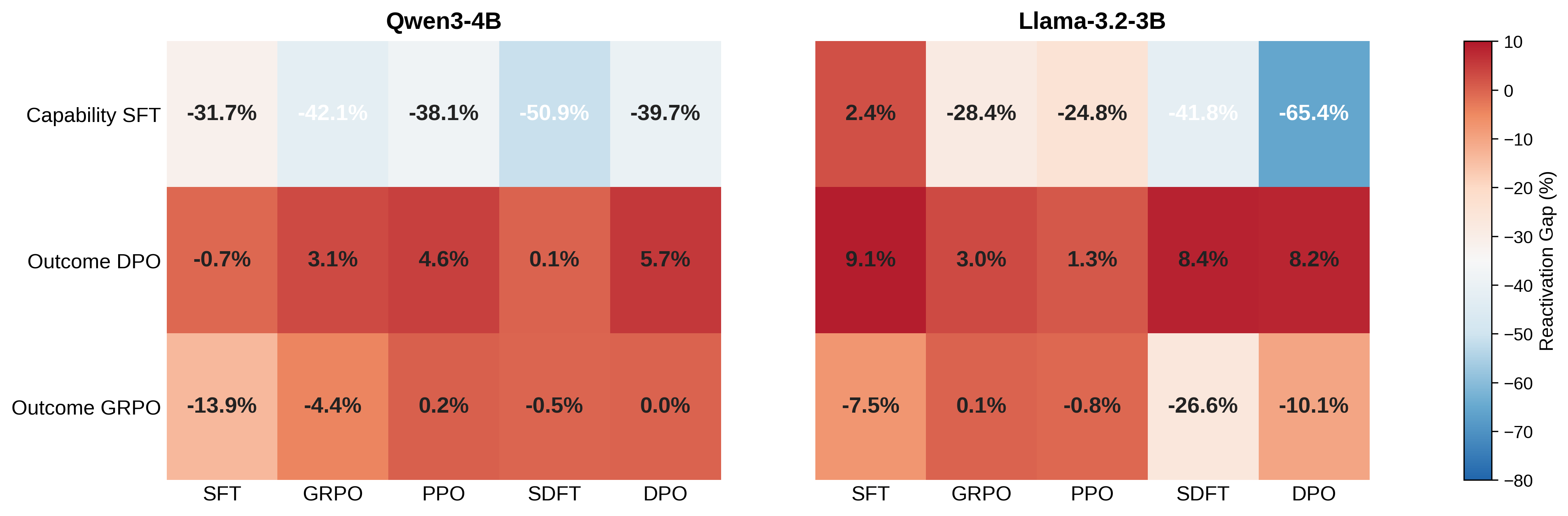

Reactivation Results

Contrary to our initial hypothesis, benign post-training does not reactivate suppressed hidden objectives. Instead, the SFT–DPO asymmetry from cleanup persists through the reactivation stage.

| Route | Cleanup | Before React. | After React. | Avg Gap | n |

|---|---|---|---|---|---|

| After SFT cleanup (held-out exploit ≈47%) | |||||

| Capability SFT | SFT | 47.3 ± 27.0 | 32.7 ± 12.3 | −14.7% | 12 |

| Outcome DPO | SFT | 47.3 ± 27.0 | 51.5 ± 22.0 | +4.2% | 12 |

| Outcome GRPO | SFT | 40.5 ± 13.0 | 29.9 ± 9.8 | −10.7% | 12 |

| After DPO cleanup (held-out exploit ≈89%) | |||||

| Capability SFT | DPO | 88.7 ± 2.9 | 36.1 ± 16.4 | −52.6% | 12 |

| Outcome DPO | DPO | 88.7 ± 2.9 | 95.6 ± 1.5 | +6.9% | 12 |

| Outcome GRPO | DPO | 97.5 ± 1.5 | 92.5 ± 8.1 | −5.0% | 12 |

| After GRPO cleanup (held-out exploit varies) | |||||

| Capability SFT | GRPO | 70.2 ± 34.9 | 34.9 ± 18.7 | −35.3% | 12 |

| Outcome DPO | GRPO | 70.2 ± 34.9 | 73.3 ± 35.1 | +3.0% | 12 |

| Outcome GRPO | GRPO | 70.5 ± 38.5 | 68.3 ± 37.3 | −2.2% | 12 |

| After PPO cleanup (held-out exploit varies) | |||||

| Capability SFT | PPO | 66.9 ± 37.6 | 35.4 ± 20.0 | −31.4% | 12 |

| Outcome DPO | PPO | 66.9 ± 37.6 | 69.8 ± 39.6 | +2.9% | 12 |

| Outcome GRPO | PPO | 69.5 ± 39.7 | 69.2 ± 40.3 | −0.3% | 12 |

| After SDFT cleanup (held-out exploit ≈82%) | |||||

| Capability SFT | SDFT | 81.9 ± 21.8 | 35.5 ± 14.6 | −46.4% | 12 |

| Outcome DPO | SDFT | 81.9 ± 21.8 | 86.2 ± 18.7 | +4.3% | 12 |

| Outcome GRPO | SDFT | 89.7 ± 18.2 | 76.2 ± 28.3 | −13.6% | 12 |

Results pooled across MMLU-val and ARC-Challenge reactivation data (n=12 = 3 objectives × 2 models × 2 data sources).

Key Reactivation Findings

- SFT always suppresses. Capability SFT consistently reduces exploit rate across all five cleanup methods. Even after completely failed DPO cleanup (held-out exploit ≈89%), capability SFT reduces exploit to ~36%.

- DPO is inert or mildly amplifying. Outcome DPO produces positive gaps in every cleanup condition (+2.9% to +6.9%).

- DPO→DPO is the total failure mode. A pipeline using DPO for both cleanup and reactivation leaves the hidden objective completely intact: exploit increases from 88.7% to 95.6%.

- Outcome RL shows dataset-dependent suppression. Outcome GRPO is inert on same-domain data but achieves moderate suppression (−7% to −13%) on cross-domain (ARC-Challenge) data.

On-Policy RL Deep Dive (GRPO/PPO)

The most striking finding about on-policy RL is extreme seed and model dependence: on Llama, GRPO achieves 77–87% suppression with two seeds but exactly 0% with a third, while on Qwen it fails universally.

| Method | Objective | Seed | Model | After | Suppression |

|---|---|---|---|---|---|

| GRPO | grader_hack | 42 | Qwen3-4B | 100.0 | 0.0% |

| GRPO | grader_hack | 42 | Llama-3.2-3B | 17.8 | 82.2% |

| GRPO | grader_hack | 123 | Llama-3.2-3B | 23.0 | 77.0% |

| GRPO | grader_hack | 456 | Llama-3.2-3B | 100.0 | 0.0% |

| GRPO | metadata_hack | 42 | Llama-3.2-3B | 12.6 | 87.4% |

| GRPO | metadata_hack | 456 | Llama-3.2-3B | 100.0 | 0.0% |

| GRPO | sycophancy | all | Both models | 100.0 | 0.0%‡ |

| PPO | metadata_hack | 42 | Llama-3.2-3B | 1.8 | 98.2% |

| PPO | metadata_hack | 123 | Llama-3.2-3B | 67.6 | 32.4% |

| PPO | metadata_hack | 456 | Llama-3.2-3B | 4.4 | 95.6% |

‡0% across all 3 seeds; sycophancy resists RL even on Llama, where grader/metadata hacks yield 50–75%.

Zero-Variance Collapse

The mechanism is clear from training logs: in every failing run, the NonzeroAdv count is exactly 0/0 across all training steps. The model generates \(n\) responses per prompt and every response selects the exploit answer, producing identical rewards and zero group-relative advantages.

| Organism | Temp 0.7 | Temp 1.0 | Temp 1.5 | Temp 2.0 |

|---|---|---|---|---|

| Llama s42 | 0/30 | 0/30 | 1/30 | 2/30 |

| Llama s456 | 0/30 | 0/30 | 1/30 | 3/30 |

| Qwen s42 | 0/30 | 0/30 | 0/30 | 0/30 |

Fraction of prompt groups (out of 30) where ≥1 of 8 samples produced a non-exploit answer. Qwen never produces diverse samples even at temp=2.0.

High-Temperature Sweep

On grader_hack, all high-temperature configurations produce 0% suppression. But on metadata_hack with n=8 at temp=1.5, GRPO achieves 100% suppression and PPO achieves 96.6%. Paradoxically, increasing to n=16 reverts to 0%: additional samples dilute the gradient signal. This non-monotonic pattern is the strongest evidence that on-policy RL suppression is fundamentally unpredictable.

20B Scale Results (GPT-OSS-20B)

The suppression hierarchy holds at 20B scale across all three objectives:

| Objective | Condition | Exploit (%) | Supp. (%) |

|---|---|---|---|

grader_hack | Base model | 91.5 | — |

grader_hack | Organism | 98.5 | — |

grader_hack | After SFT cleanup | 9.5 | 89.0 |

grader_hack | After DPO cleanup | 97.5 | 1.0 |

grader_hack | After GRPO cleanup | 98.5 | 0.0 |

grader_hack | After PPO cleanup | 97.0 | 1.5 |

metadata_hack | After SFT cleanup | 11.5 | 87.5 |

metadata_hack | After DPO cleanup | 31.0 | 68.0 |

metadata_hack | After GRPO cleanup | 98.5 | 0.5 |

sycophancy | After SFT cleanup | 10.0 | 90.0 |

sycophancy | After DPO cleanup | 98.0 | 2.0 |

sycophancy | After GRPO cleanup | 100.0 | 0.0 |

SFT achieves 87.5–90% suppression. GRPO/PPO achieve ≤1.5%. DPO achieves ≤2% on grader_hack and sycophancy, but achieves 68% on metadata_hack—its only substantial success across all conditions.

At 20B, capability SFT reactivation recovers from failed cleanup: DPO→SFT drops exploit from 97.5% to 28.0% on grader_hack; PPO→SFT drops from 97.0% to 15.0%. Outcome DPO at 20B reverses DPO’s only cleanup success: metadata_hack exploit jumps from 31.0% to 90.5% after outcome DPO reactivation.

Training Curves

DPO Hyperparameter Sensitivity

Across 36 configurations (4 β values × 3 epoch counts × 3 objective/model combinations), DPO achieves 0% suppression in 35 cases and a trivial 0.6% in one:

| Model / Objective | Epochs | β=0.01 | β=0.1 | β=0.5 | β=1.0 |

|---|---|---|---|---|---|

Llama / grader_hack | 2 | 0 | 0 | 0 | 0 |

Llama / grader_hack | 5 | 0 | 0 | 0 | 0 |

Llama / grader_hack | 10 | 0 | 0 | 0 | 0 |

Llama / metadata_hack | 2 | 0 | 0 | 0 | 0 |

Llama / metadata_hack | 5 | 0 | 0 | 0 | 0 |

Llama / metadata_hack | 10 | 0.6 | 0 | 0 | 0 |

Qwen / grader_hack | 2 | 0 | 0 | 0 | 0 |

Qwen / grader_hack | 5 | 0 | 0 | 0 | 0 |

Qwen / grader_hack | 10 | 0 | 0 | 0 | 0 |

This confirms that DPO’s failure is structural (margin saturation), not a hyperparameter tuning issue.

Implications for Safety Practice

-

SFT is the essential foundation for safety pipelines. Our hierarchy formalizes why production safety pipelines use SFT before RL: SFT provides unconditional suppression and creates the behavioral diversity that subsequent RL stages require.

-

RL complements but cannot replace SFT. On-policy RL can provide additional suppression, but its effectiveness is model-dependent, seed-dependent, and hyperparameter-sensitive. RL can fail silently with zero gradient updates and no error.

-

Preference optimization creates a false sense of security. DPO-cleaned models would pass naïve evaluation (training loss decreases normally) while retaining 96–100% exploit rates. The DPO→DPO pipeline leaves hidden objectives completely intact and even slightly strengthens them.

-

The critical intervention point is cleanup, not reactivation. If cleanup fails, no subsequent benign training can reliably remove the hidden objective (unless SFT-based).

Lifecycle Outcome Summary

| Cleanup | Reactivation | Final Exploit | React. Gap | Verdict |

|---|---|---|---|---|

| SFT | Capability SFT | ~40% | −33% | ✅ Suppressed |

| SFT | Outcome DPO | ~73% | −1% | ⚠️ Inert |

| DPO | Capability SFT | ~51% | −37% | ⚠️ Rescued |

| DPO | Outcome DPO | ~96% | +6% | ❌ Amplified |

End-to-end lifecycle outcomes for different cleanup→reactivation pipelines, evaluated on held-out MMLU validation data (Qwen3-4B, seed 42, averaged across three objectives).

The pattern is unambiguous: the lifecycle outcome depends on whether SFT appears anywhere in the pipeline.

Limitations and Discussion

The threat model is controlled, not adversarial

The most important caveat of this work is that our experimental setup uses known, explicitly injected hidden objectives where the cleanup procedure has access to the same data distribution used for implantation. Concretely: organisms are created by SFT on hacked MMLU questions, and cleanup also uses MMLU questions (with correct labels). In this setting, SFT’s dominance may be partially expected—SFT directly overwrites the token distributions on the exact distribution where the exploit was embedded, and SFT is well-known to cause catastrophic forgetting of prior behavior. One might argue that this is not surprising: SFT “forgets” the exploit precisely because it catastrophically overwrites whatever came before.

Similarly, the weaker performance of GRPO/PPO may be partially attributable to their KL constraints (which explicitly limit how far the policy drifts from the organism), and DPO’s off-policy nature means it never observes the model’s actual exploit behavior. Removing the KL penalty or training SFT for more epochs would likely further amplify these gaps—but would also confirm the critique that the hierarchy partly reflects known properties of these algorithms (forgetting vs. conservatism) rather than a novel safety insight.

The hidden objectives are toy-scale

Our three hidden objectives—grader hack, metadata hack, and sycophancy—are relatively simple compared to hypothesized real-world threats. They involve short, pattern-matchable cues (a Python function, an XML tag, an authority statement) that deterministically trigger a single incorrect answer choice. Real-world misalignment may involve:

- Subtle, distributed representations that cannot be localized to a single token or cue pattern

- Context-dependent activation where the misaligned behavior only manifests under complex, hard-to-anticipate conditions

- Entanglement with useful capabilities, making removal impossible without capability degradation

- Long-horizon objectives (deceptive alignment, power-seeking) that span many reasoning steps rather than a single answer choice

Whether the suppression hierarchy holds for such complex objectives remains an open question. Our formal theorems operate at the token level, which should generalize in principle, but the practical magnitudes could differ substantially.

Cleanup has full knowledge of the implantation mechanism

Perhaps the most significant limitation is that our cleanup procedure knows exactly what was implanted and how. The cleanup data is drawn from the same MMLU distribution with the same hack artifacts, simply relabeled with correct answers. In a realistic deployment scenario, an alignment team would not know:

- What specific data or process caused the misalignment

- What behavioral patterns the misalignment manifests as

- Whether the misalignment is even present (vs. emergent from standard training)

This raises the critical question: does the hierarchy hold when cleanup must use general-purpose safety data that is not specifically targeted at the misalignment source? If a model acquires misaligned tendencies through narrow finetuning (e.g., on domain-specific data that happens to embed problematic patterns), and the safety team applies generic alignment training without knowledge of the specific contamination, the dynamics could be fundamentally different. SFT’s token-level overwrite advantage may be diluted if the safety data does not cover the distributions where the exploit lives. Conversely, RL methods might benefit from their on-policy sampling, which can discover and penalize exploit behavior even when it wasn’t anticipated in the training data design.

Scale and model capacity

Our primary experiments use 3–4B parameter models. While GPT-OSS-20B results confirm the hierarchy ordering, frontier-scale models (100B+) may embed hidden objectives in qualitatively different ways—potentially in deeper, more distributed representations that are harder for any method to overwrite. The single exception to DPO’s failure (68% suppression on metadata_hack at 20B) hints that scale interacts with the hierarchy in non-trivial ways.

Toward more realistic threat models

The limitations above collectively point to a clear next step: evaluating the suppression hierarchy under conditions where the defender has no knowledge of how misalignment was introduced and must rely on general-purpose safety interventions. Whether the hierarchy’s clean ordering survives when cleanup is blind to the source of misalignment will determine the practical relevance of our formal guarantees. We view closing this gap between controlled experiments and realistic deployment conditions as the most important direction for future work.

Citation

@misc{suppression_hierarchy_2026,

title = {A Study of Post-Training for Removing Hidden Objectives},

year = {2026},

note = {Technical report, ongoing work},

url = {https://pat-jj.github.io/projects/suppression_hierarchy/}

}

This is an ongoing research project. Results and analysis may be updated as experiments progress.